Once upon a time, I spent multiple months implementing a graphing calculator using GW-BASIC (manual). Would I be able to do the same more quickly and effectively today using generative AI?

❦❦❦

Let’s give it a go, using Claude AI to start:

Implement a simple graphing calculator in GW-BASIC.

—knz

Here is some GW-BASIC code to implement a simple graphing calculator:

10 ' Simple Graphing Calculator

20 SCREEN 2: CLS

30 INPUT "Enter equation in form y=f(x) "; f$

40 PRINT "Graphing "; f$

50 FOR x = 0 TO 319

60 LET y = EVAL(f$)

70 PSET (x, 159 - y)

80 NEXT x

90 PRINT "Press any key to exit"

100 A$ = INKEY$: IF A$ = "" THEN 100

—Claude AI

This is encouraging: Claude AI knows about GW-BASIC’s mandatory line numbering; it knows about the SCREEN statement and the INKEY$ function; and it produces a reasonably simple program.

(In contrast, ChatGPT does not know about mandatory line numbers and insists on using the incompatible Visual Basic syntax; it does not know about SCREEN and other GW-BASIC language features; and it insists on producing a complex program upfront, which makes later iterations more difficult. Regardless, the remainder of the results discussed below apply equally to Claude AI and ChatGPT, and incidentally to GitHub Copilot as well.)

❦❦❦

Encouraging, yes. But is it good enough?

The GW-BASIC language does not provide an EVAL function. Claude AI (and ChatGPT, and Copilot) insist on using one as a first approximation, although it does not exist in the language!

When I originally worked on this, even before starting to program I knew that the heart of the problem was to figure out how to input a mathematical function from the user as a string and evaluate this function for each point on the screen. I knew I needed something like an EVAL function and, at the time, most programming languages in common use (including BASIC) did not provide such a facility.

Moreover, at the time (14 years of age), I had not yet studied program compilation and recursive descent parsing and evaluation, and so I would have needed help.

And so Claude AI’s first approximation brings us no closer to the goal today than where I started many years ago.

Can we nudge it in the right direction?

❦❦❦

Sadly, Claude AI fails here:

GW-BASIC does not provide an EVAL function. Implement EVAL using other language features.

—knz

You’re right, GW-BASIC does not have an EVAL function. Here is one way to implement basic EVAL functionality using other GW-BASIC language features:

100 'EVAL function

110 DEF FNEVAL(f$)

120 FOR i = 1 TO LEN(f$)

130 c$ = MID$(f$, i, 1)

140 IF c$ = "+" THEN p$ = p$ + " + "

150 IF c$ = "-" THEN p$ = p$ + " - "

160 IF c$ >= "0" AND c$ <= "9" THEN p$ = p$ + c$

170 IF c$ = "X" THEN p$ = p$ + STR$(x)

180 IF c$ = "^" THEN p$ = p$ + "**"

190 NEXT

200 EVAL = EVAL(p$)

210 END DEF

—Claude AI

The failure is that it attempts to define EVAL in terms of itself: see the recursive call on line 200. (Additionally, it starts to use syntax that is invalid for the target language, since DEF FN does not support multi-line definitions in GW-BASIC, but this is inconsequential to the problem at hand.)

ChatGPT, in contrast, appears to be more useful by implementing separate input tokenization and recursive evaluation functions (link to conversation):

Implement an EVAL function in GW-BASIC. Remember, GW-BASIC has mandatory line numbers and does not provide EVAL natively.

—knz

GW-BASIC indeed lacks a built-in EVAL function, but you can implement a basic version using string manipulation and indirect execution. Here’s a simple example of how you might create an EVAL function in GW-BASIC:

(… code …)

This program defines several functions:

- FNPARSE: Parses the input expression into tokens.

- FNOPERATOR: Returns the precedence of an operator.

- FNAPPLY: Applies an operator to two operands.

- FNPARSEEXPR: Converts the infix expression into postfix notation.

- FNEXECUTE: Evaluates the postfix expression.

- FNVALIDATE: Validates the input expression for correctness.

- FNPARSEANDCALCULATE: Integrates the above functions to parse and calculate the result of the input expression.

—ChatGPT

Besides the minor inconveniences already identified above (ChatGPT favors syntax that does not exist in GW-BASIC), the approach taken here does not deliver good value: there is a lot of code, but little functionality. This code can barely evaluate simple arithmetic with operator precedence. For example, it knows nothing of GW-BASIC’s own library of mathematical functions (including its trigonometry package)! It also contains a few bugs.

Given the original goal (a graphing calculator), we are still far from a usable solution. We are already paying the expense of a fairly large sub-program (100 to 200 lines of code), a huge performance overhead (100x or more slower than BASIC’s own evaluator), and rather intricate logic that will need to be maintained and extended as new features are added.
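To give a sense of how much machinery even a minimal hand-written evaluator needs, here is a rough sketch of a recursive descent evaluator. It is written in Python rather than GW-BASIC, purely so that it can be run on a modern machine, and it is not the code ChatGPT produced (which converted infix to postfix instead). The FUNCTIONS table, the grammar, and every name below are assumptions made for this illustration. Only the four listed functions are recognized, and a signed exponent such as 2^-1 is rejected.

```python
import math
import re

# Hypothetical whitelist: every supported function has to be listed by hand.
FUNCTIONS = {"SIN": math.sin, "COS": math.cos, "SQR": math.sqrt, "ABS": abs}
TOKEN = re.compile(r"\s*(\d+\.?\d*|[A-Za-z]+|[-+*/^()])")

def tokenize(text):
    """Split an expression into numbers, names, and one-character operators."""
    text = text.rstrip()
    tokens, pos = [], 0
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise ValueError(f"unexpected character at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens

class Parser:
    def __init__(self, tokens, x):
        self.tokens, self.pos, self.x = tokens, 0, x

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def take(self, expected=None):
        token = self.peek()
        if token is None or (expected is not None and token != expected):
            raise ValueError(f"expected {expected or 'a token'}, got {token!r}")
        self.pos += 1
        return token

    def expression(self):
        # expression := term (("+" | "-") term)*
        value = self.term()
        while self.peek() in ("+", "-"):
            if self.take() == "+":
                value += self.term()
            else:
                value -= self.term()
        return value

    def term(self):
        # term := unary (("*" | "/") unary)*
        value = self.unary()
        while self.peek() in ("*", "/"):
            if self.take() == "*":
                value *= self.unary()
            else:
                value /= self.unary()
        return value

    def unary(self):
        # unary := "-" unary | power   (negation binds less tightly than "^")
        if self.peek() == "-":
            self.take()
            return -self.unary()
        return self.power()

    def power(self):
        # power := atom ("^" atom)*, applied left to right
        value = self.atom()
        while self.peek() == "^":
            self.take()
            value = value ** self.atom()
        return value

    def atom(self):
        # atom := number | "X" | name "(" expression ")" | "(" expression ")"
        token = self.take()
        if token == "(":
            value = self.expression()
            self.take(")")
            return value
        name = token.upper()
        if name == "X":
            return self.x
        if name in FUNCTIONS:
            self.take("(")
            argument = self.expression()
            self.take(")")
            return FUNCTIONS[name](argument)
        if token[0].isdigit():
            return float(token)
        raise ValueError(f"unexpected token {token!r}")

def evaluate(text, x):
    """Evaluate `text` as a function of X at the given value of x."""
    parser = Parser(tokenize(text), x)
    value = parser.expression()
    if parser.peek() is not None:
        raise ValueError(f"unexpected token {parser.peek()!r}")
    return value
```

Calling evaluate("2+3*4", 0) returns 14.0. Even at this size, every additional function or operator still has to be added by hand, which is the maintenance cost described above.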

Surely there is another way?

❦❦❦

At the time I first worked on this problem, the very first thing I did was conscientiously read the user manual (copy here). One feature stood out, one that none of the AI programming assistants seem to know about:

CHAIN Statement

Purpose:

To transfer control to the specified program and pass (chain) variables to it from the current program.

Syntax:

CHAIN[MERGE] filename[,[line][,[ALL][,DELETE range]]]

Comments:

MERGE overlays the current program with the called program.

filename is the name of the program that is called to be chained to. […]

line is a line number or an expression that corresponds to a line number in the called program. It is the starting point for execution of the called program. […]

ALL specifies that every variable in the current program is chained to the called program. […]

With this equipment, I was able to implement the following gadget on my own:

10 INPUT "Enter expression: "; f$

20 OPEN "EVAL.BAS" FOR OUTPUT AS #1

30 PRINT #1, "10000 Y="; f$

40 PRINT #1, "10010 RETURN"

50 CLOSE #1

60 CHAIN MERGE "EVAL.BAS", 70, ALL

70 FOR X=0 TO 100

80 GOSUB 10000

90 PRINT "RESULT: "; Y

100 NEXT X

110 END ' stop before falling through into the merged subroutine at line 10000

This code takes the character string provided by the user, assumes it uses valid BASIC syntax, and loads it as a sub-program into the text of the current program. We then rely on GW-BASIC’s own evaluation code to evaluate the value of Y for each assignment of X.
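The trick is not specific to GW-BASIC. As a rough sketch in Python (chosen only so the example can be run today), the program below writes the user’s expression into a source file and then asks the host language to load that file as code, mirroring what lines 20 to 60 of the gadget do. The file name eval_generated.py, the function names, and the small table of math functions are all assumptions of this illustration. Like the BASIC version, it trusts the input completely and will execute whatever the user types.

```python
import math
import tempfile
from pathlib import Path


def load_generated_function(expression, directory):
    """Write `expression` into a source file, then load that file as code."""
    source_path = Path(directory) / "eval_generated.py"  # hypothetical file name
    # Counterpart of lines 20-50: emit program text wrapping the user's expression.
    source_path.write_text(f"def y(x):\n    return {expression}\n")
    # Counterpart of CHAIN MERGE: the host language parses the new text itself.
    namespace = {"sin": math.sin, "cos": math.cos, "sqrt": math.sqrt}
    code = compile(source_path.read_text(), str(source_path), "exec")
    exec(code, namespace)  # trusts the input, exactly like the BASIC gadget
    return namespace["y"]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as scratch:
        y = load_generated_function("x * x + 1", scratch)
        for x in range(4):
            print("RESULT:", y(x))
```

No parser or evaluator is written by hand here: the expression is evaluated by the language’s own machinery, which is what makes the approach so short.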

Simple enough? Let’s see if the programming assistant can get this far (conversation link):

Consider the following manual page for the GW-BASIC language:

… copy of the CHAIN manual page from above …

Using the CHAIN MERGE statement, implement a program that inputs a valid BASIC expression as a function of X, then prints the result of its valuation over a range of values configurable by the user. After the program has printed the value, it should allow the user to choose another range of input values.

Remember, GW-BASIC has mandatory line numbers and does not provide EVAL natively.

—knz

Certainly! Below is an example GW-BASIC program that uses the CHAIN MERGE statement to evaluate a BASIC expression as a function of X over a configurable range of values. The user is prompted to enter a BASIC expression, and then they can input the range of values for X. The program uses a loop to iterate over the range, evaluates the expression for each X value, and prints the result.

10 PRINT "Enter a BASIC expression as a function of X:"

20 INPUT expression$

30 REM Save the expression to a temporary file

40 OPEN "TEMP.BAS" FOR OUTPUT AS #1

50 PRINT #1, "100 DEF FNVAL(X) = "; expression$

60 CLOSE #1

70 REM Chain to the evaluation program

80 CHAIN MERGE "TEMP.BAS"

100 REM Evaluation program starts here

110 LINE INPUT "Enter the starting value for X: ", start

120 LINE INPUT "Enter the ending value for X: ", end

130 LINE INPUT "Enter the step size for X: ", step

140 FOR X = start TO end STEP step

150 result = FNVAL(X)

160 PRINT "Value for X = "; X; " : "; result

170 NEXT X

180 LINE INPUT "Do you want to evaluate another range? (Y/N): ", choice$

190 IF choice$ = "Y" THEN CHAIN MERGE "TEMP.BAS"

200 END

Explanation:

- The user is prompted to enter a BASIC expression in line 10.

- The expression is saved to a temporary file “TEMP.BAS” in lines 40-60.

- The program then uses the CHAIN MERGE statement to transfer control to the evaluation program in lines 80-80.

- The evaluation program prompts the user to enter the range of values for X (start, end, step) in lines 110-130.

- The program then uses a loop to iterate over the range of X values, evaluates the expression using the FNVAL function, and prints the results in lines 140-160.

- After evaluating the range, the user is prompted to decide whether to evaluate another range in lines 180-190.

- If the user chooses to evaluate another range, the program uses CHAIN MERGE to restart the evaluation program with the new expression in line 190.

Note: This example assumes that the expression entered by the user is valid and does not include error handling for simplicity. You may want to add additional error-checking and handling as needed.

—ChatGPT

(NB: neither GitHub Copilot nor Claude AI was able to provide a solution.)

How does the program above fare? It has the right structure: it properly emits the user string into a program that gets overlaid onto the current program. The overall control flow has shortcomings, but these can be readily fixed and the result integrated into a larger program.

❦❦❦

Remarkably, the result above is unstable. It took me multiple iterations of the input prompt to obtain a valid program. Even small variations in the prompt resulted in programs that were unsuitable, incorrect, or both.

In other words, it appears as if the programming assistant is only able to help here if the programmer already knows the solution themselves.

❦❦❦

Interestingly, I am also unable to have it generate a program that lets the user input either a new function or a new range of values after the first execution.

All the prompts I tried in this direction confuse ChatGPT and make it reach for a non-existent built-in EVAL function.

What gives?

❦❦❦

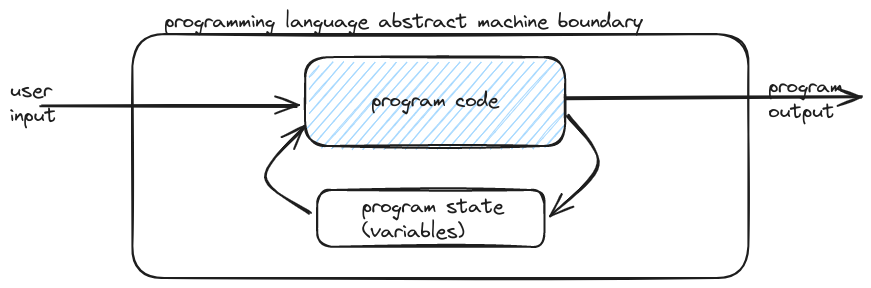

After numerous more experiments, including using other programming assistants and programming languages, the best explanation I have is that AI programming assistants have been designed and optimized to “fill in the blank” for programming problems that follow this model:

That is, the assistant assumes that the user-programmer has a fixed programming language and its abstract machine model (AMM) and the generated solution-program must fit in that box.

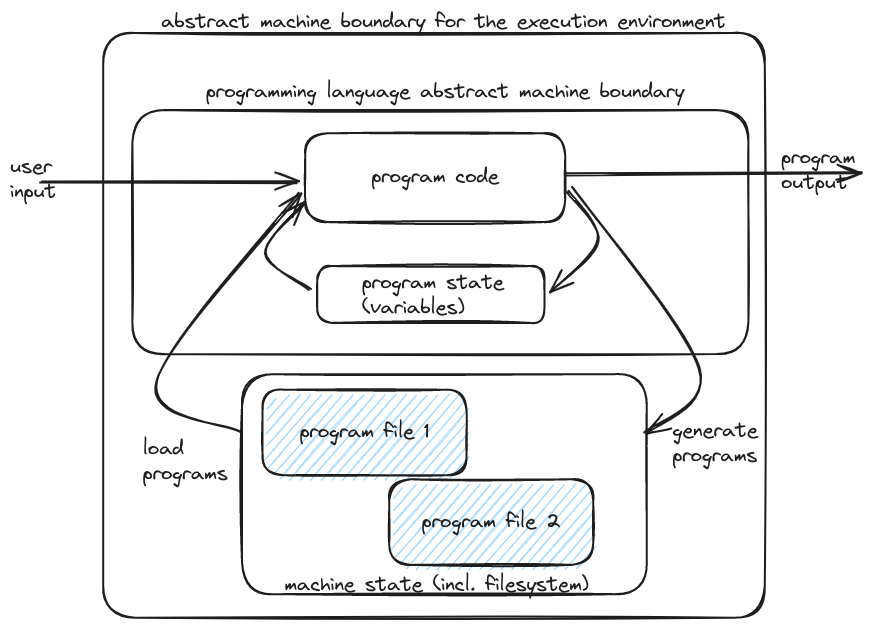

As soon as the problem does not fit this model, the generation starts to fail in interesting ways. In the examples above, we are seeing the effects of layered abstractions, where the program code at one level is part of (writable) machine state at another level:

Based on my experiments so far, it is not yet possible to ask programming assistants to design software systems that exploit the I/O facilities of an inner AMM to perform meta-programming using the facilities of an outer AMM.

The machine does not (yet) think outside of the box.
